Plotting a Square in Perspective

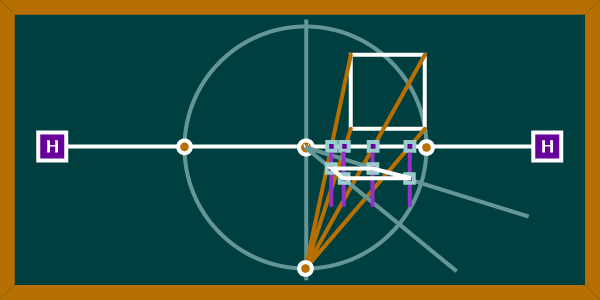

Much of the creation of spaces in linear perspective relies on the establishment of a basic unit: the square. A square can be the basis for the construction of a grid, circular forms, and the establishment of inclines. This diagram illustrates a reliable method for approximating a view of a square drawn in linear perspective.

Steps for Making a Square

- In order to faithfully reproduce anything in linear perspective it is important to establish a cone of vision. We have done this in the diagram by creating a perfect circle whose center is the vanishing point. A larger cone of vision works better and helps to eliminate distortions.

- We should also establish a vertical axis. This axis will help us to establish placement of the perspective square in the space.

- Next we can place a square somewhere adjacent to the circle of vision. It can be placed anywhere, but its placement will dictate where the perspective square will fall. If the square is close to the horizon line, then the perspective square will be smaller and farther back in the space. If it is farther from the horizon line, then the perspective square will be larger and closer to the viewer. The square should always be inside or at least touching the cone of vision. If it is placed below the horizon, the perspective square will be drawn above, and vice versa.

- Now draw lines from each of the four corners of the square to the point where the axis line meets the cone of vision, at either its top or its bottom. Which point you use depends on whether your square is placed above or below the horizon line: choose the one on the opposite side of the horizon from the square, so that the lines cross the horizon line. Remember that the perspective square will be drawn in the opposite semicircle.

- At the points where the lines intersect the horizon line, draw lines vertically, perpendicular to the horizon line. This is illustrated with four purple lines that drop vertically from those four intersections.

- Now the square will begin taking shape when you draw two convergences from the vanishing point. These two convergences should extend out past the boundary of the circle. At the points where these convergences intersect the verticals you will establish the perspective square.

- You can check your accuracy by drawing construction lines from the edges of the circle on the horizon through the rear corners of the plotted perspective square. The lines should also intersect with the front corners.
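
The steps above can also be followed numerically. The sketch below is only an illustration under stated assumptions, not part of the original diagram: the vanishing point sits at (0, 0), the horizon runs along y = 0, the flat square is placed above the horizon, and the lines from its corners run to the bottom of the axis. The names `horizon_crossing`, `plot_perspective_square`, and the `eye_height` parameter are hypothetical. `eye_height` is one way to fix the slope of the two convergences, which the steps leave to the person drawing.

```python
def horizon_crossing(corner, station):
    """x-coordinate where the line from corner to station crosses y = 0."""
    (cx, cy), (sx, sy) = corner, station
    t = cy / (cy - sy)  # fraction of the way from corner toward station
    return cx + t * (sx - cx)


def plot_perspective_square(radius, left, near, side, eye_height):
    """Plot a flat square (drawn above the horizon) in perspective below it.

    Assumed coordinates: vanishing point at (0, 0), horizon along y = 0.
    left/near give the flat square's lower-left corner, side its edge length.
    eye_height is a hypothetical parameter that fixes the two convergences.
    """
    if left == 0 or left + side == 0:
        raise ValueError("a side edge lying on the vertical axis is degenerate")
    station = (0.0, -radius)  # bottom of the axis, where it meets the circle
    plan = {
        "front_left": (left, near),
        "front_right": (left + side, near),
        "back_left": (left, near + side),
        "back_right": (left + side, near + side),
    }
    plotted = {}
    for name, (x, y) in plan.items():
        vertical_x = horizon_crossing((x, y), station)  # where the vertical drops
        slope = -eye_height / x  # convergence from the vanishing point for this side
        plotted[name] = (vertical_x, slope * vertical_x)  # vertical meets convergence
    return plotted


corners = plot_perspective_square(radius=10, left=2, near=2, side=4, eye_height=3)
for name, point in corners.items():
    print(name, point)
```

With these sample values the two front corners land on the same horizontal line, as do the two rear corners, and the rear edge comes out shorter than the front edge, which is the foreshortening the drawn construction produces.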

This should establish a convincing square that appears to recede into space. To check your work, make sure that the diagonal lines which emanate from the edges of the cone of vision intersect the corners of the square. Also check to make sure that the right and left edges recede back to the vanishing point. You can also check a square that you have approximated by reversing this process. I also recommend good observation of a real space to complement this process.
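
The two checks described here can be written as collinearity tests. This is a rough sketch under assumptions of my own: the vanishing point is at (0, 0) unless another is passed in, the "edges of the cone of vision" are taken to be the two points where the circle crosses the horizon, and the helper names `collinear` and `check_square` are hypothetical.

```python
def collinear(p, q, r, tol=1e-9):
    """True if points p, q, r lie on one straight line (cross product near 0)."""
    cross = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return abs(cross) <= tol


def check_square(front_left, front_right, back_left, back_right,
                 radius, vanishing_point=(0.0, 0.0)):
    """Run the diagonal and convergence checks on a plotted perspective square."""
    vx, vy = vanishing_point
    right_edge = (vx + radius, vy)  # circle meets the horizon on the right
    left_edge = (vx - radius, vy)   # circle meets the horizon on the left
    return {
        # Side edges should recede to the vanishing point.
        "left_edge_to_vp": collinear(front_left, back_left, vanishing_point),
        "right_edge_to_vp": collinear(front_right, back_right, vanishing_point),
        # A line from each circle edge through a rear corner should hit a front corner.
        "diagonal_from_right": collinear(right_edge, back_right, front_left),
        "diagonal_from_left": collinear(left_edge, back_left, front_right),
    }


# Sample corners for a circle of radius 10 (illustrative values only).
report = check_square(
    front_left=(10 / 6, -2.5), front_right=(5.0, -2.5),
    back_left=(1.25, -1.875), back_right=(3.75, -1.875),
    radius=10,
)
print(report)
```

Any entry that comes back `False` points to the corner or edge worth redrawing. For a hand-measured drawing, a looser `tol` is more realistic than the default.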